When engineering gets 100% meta-rational

Demand for meta-rational competence is about to explode. Don't miss the boat

The sudden forward jerk 𐡸 When meta-rationality is the only job left 𐡸 Can AI do meta-rationality too? 𐡸 Talking my book

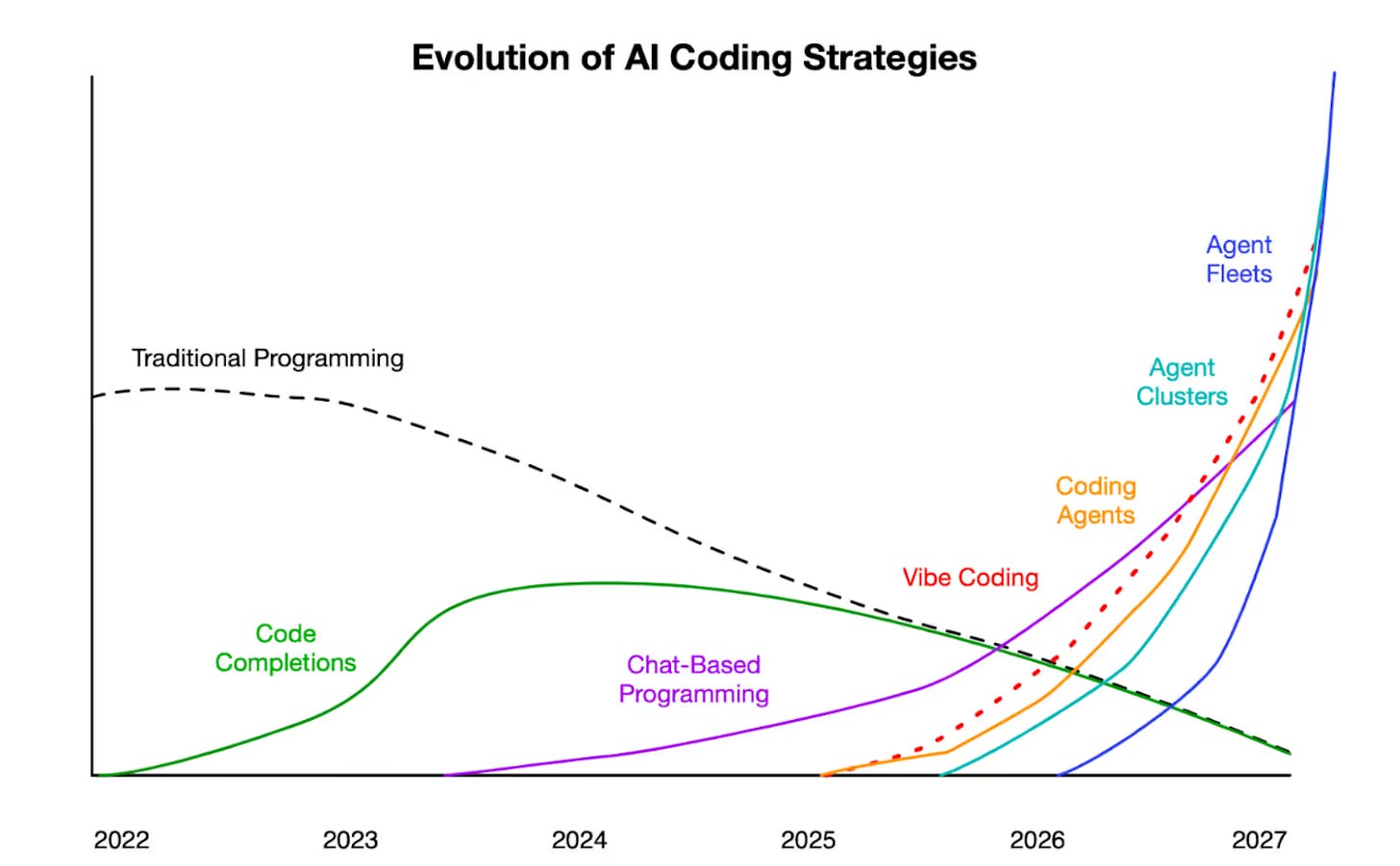

AI obsoleted software engineering three months ago. Routine rational programming no longer requires humans.

You can now tell “coding agents” what to build, and give them some general guidance about how, and they’ll do the whole thing by themselves. Sometimes they screw up, but often they write a complex app from scratch and get it right.

Or! That’s what many people with relevant expertise say. Others say this is the usual AI hype.1 My guess is that the claim is somewhat exaggerated, but moderately true now,2 and likely mostly-true fairly soon.

Late last year, some serious engineers stopped writing code entirely. Instead, they just tell AI systems what needs to get written. Some say they no longer even read that code: the agents are reliable enough that there’s no need to check their work.

Software engineering has been the most economically valuable rational work for decades. The coding part of that, at least, may be about over.

I said that coding agents need you to tell them what to build (“requirements analysis”), and the big picture of how (“architecting”). Those are the parts of the process that still require human judgement. They are meta-rational parts of software development!

The meta-rational aspects of software development are its most economically valuable—although that’s often not understood. Giant software development projects, with hundreds of millions of dollars invested, often fail due to missing or incompetent meta-rationality.

Varun Godbole and Dan Hunt explain why “AI-first” software development especially requires meta-rationality:

We’ve come to see the pursuit of value creation with AI as the cultivation of capacity for nebulosity. Traditional software development afforded a sense of certainty in the “how”, even if the “why/what” were deeply uncertain. AI-first work is fundamentally different. It forces teams to confront the nebulosity of the “why” and “what” in a comparatively confronting way. This creates a specific kind of pressure. Individuals have to accept that while certainty is impossible, you can develop the skills and discernment to confidently navigate product development faster. Not because you eventually achieve certainty. But because you get better at navigating without it.3

I’ll next summarize briefly a series of posts I’ve written about meta-rationality in software development. (They start here.)

What is needed? Software purposes

A system that does something different from what was needed is useless, no matter how well it works technically. “What is needed?” is a question of purpose.

Rationality (problem solving) deliberately excludes consideration of purposes. It just takes the problem statement as a given, without considering whether it is sensible in context. Rational requirements analysis methods usually miss the mark:

It’s common for software projects to deliver something that more-or-less matches what the customers said they wanted, but is nothing like what they imagined. Expensive and useless, like an ornate Victorian treehouse.4

Purposes are central for meta-rationality:

The essence of meta-rationality is actually caring for the concrete situation, including all its context, complexity, and nebulosity, with its purposes, participants, and paraphernalia.5

How should we go about this? Software architecture

Software projects also often fail because the overall design of the system, its architecture, was a bad fit for the job.

Architectural choices depend on unboundedly complex, nebulous aspects of the context. That includes participants such as customers, users, managers of users, and regulators. It includes hardware and software paraphernalia, such as connected devices, software libraries, and external databases.

Rationality critically depends on excluding nebulosity, and excluding considerations of context. That leads to making bad architectural choices early in a software project. Those can commit the team essentially permanently; changing architecture is enormously expensive, not usually attempted, and rarely successful.

Bad architecture is common because skilled architecting requires meta-rational skills that aren’t taught and that most people don’t realize exist. There is no formal qualification for the job, but also many of those who do it aren’t functionally qualified either.6

The value of software meta-rationality is about to be revalued

Upward. Drastically. I suspect!

Some organizations recognize the value of meta-rationality, and support and reward it. Most do not.

In current software development organizations, meta-rationality is typically invisible, denigrated, neglected, or overcontrolled.

Clueful technical leaders recognize the cost of this neglect. If no one else does the job, they may reluctantly take responsibility, for the sake of the overall success of their team; even knowing their careers may suffer. You can read rueful discussions of this sacrifice, and ways to minimize its cost, on their blogs.7

If AI can do all the rational work, everything that’s left for humans must be meta-rational, by definition. Then meta-rational developers will be the whole software job market.

Meta-rational competence is rare.

If one meta-rational developer, plus a swarm of coding agents, can replace an entire team, they will be extraordinarily valuable. And (because they are rare) they can probably capture a significant chunk of that value.

Boris Cherny leads the development of Claude Code, currently the most powerful autonomous code-writing system. In a recent podcast about how such systems will transform the job market, he said:

Every founder and hiring manager I’ve been speaking with these days is feeling the same pressure. Hire the best people as fast as possible, but recruiting is time-consuming, alignment is hard, and competition for great talent keeps getting tighter.8

A point I’ve made repeatedly is that the standard departmental siloing of software-related disciplines is deeply dysfunctional.9 Meta-rationality characteristically ignores field boundaries.

I suspect software development will increasingly require single individuals to combine:

Product management: figuring out what people need software to do; specifying those purposes in technical-enough terms that artificial coding agents can implement a solution; checking with the people who use it that it does do what they need; revising specifications if it doesn’t.

System architecting: choosing a general strategy for implementation, and the particular framework technologies within which it will operate.

Project management: dividing up the programming work into chunks small enough that fleets of coding agents can complete them reliably; revising the task decomposition when they fail.
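As a toy illustration of that last loop, here is a minimal Python sketch of dividing work into chunks and revising the decomposition when a chunk fails. Everything in it is hypothetical: `Task`, `split`, `run_with_revision`, and `toy_agent` are names invented for the example, the numeric `size` stands in for a human judgement about how big a chunk is, and halving stands in for the real work of re-decomposing. It does not call any actual coding agent.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Task:
    description: str
    size: int  # hypothetical effort estimate, in arbitrary units


def split(task: Task) -> List[Task]:
    """Halve a task; a stand-in for a human revising the decomposition."""
    half = task.size // 2
    return [
        Task(f"{task.description} (part 1)", half),
        Task(f"{task.description} (part 2)", task.size - half),
    ]


def run_with_revision(
    tasks: List[Task],
    agent: Callable[[Task], bool],
    max_rounds: int = 5,
) -> Tuple[List[Task], List[Task]]:
    """Give each task to `agent`; split failed tasks and retry.

    Returns (completed tasks, tasks still unresolved after max_rounds).
    """
    done: List[Task] = []
    stuck: List[Task] = []  # failed tasks too small to split further
    pending = list(tasks)
    for _ in range(max_rounds):
        if not pending:
            break
        failed = []
        for task in pending:
            if agent(task):
                done.append(task)
            else:
                failed.append(task)
        pending = []
        for task in failed:
            if task.size > 1:
                pending.extend(split(task))
            else:
                stuck.append(task)
    return done, pending + stuck


def toy_agent(task: Task) -> bool:
    # Hypothetical stand-in for a coding agent: it only "succeeds"
    # on chunks of size 3 or smaller.
    return task.size <= 3


done, unresolved = run_with_revision([Task("build billing module", 10)], toy_agent)
```

In this toy run, the size-10 task fails twice and ends up as four completed chunks of sizes 2, 3, 2, and 3. The interesting part is what the sketch leaves out: deciding where to cut, which is the human judgement the list above describes.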

Boris Cherny says:

I don’t have to do the tedious work anymore of coding. The fun part is figuring out what to build. It’s talking to users. It’s thinking about these big systems. It’s thinking about the future. It’s collaborating with other people on the team, and that’s what I get to do more of now.

His advice to software professionals:

Try to be a generalist more than you have in the past. In school, a lot of people that study CS, they learn to code, and they don’t really learn much else. But some of the most effective engineers that I work with every day, and some of the most effective product managers and so on, they cross over disciplines.

Some of the strongest engineers are hybrid, product engineers with really great design sense. Or an engineer that has a really good sense of the business, and can use that to figure out what to do next, or an engineer that also loves talking to users and can just really channel what users want to figure out what’s next.

So, I think a lot of the people that will be rewarded the most over the next few years they won’t just be AI native, and they don’t just know how to use these tools really well, but also they’re curious and they’re generalists, and they cross over multiple disciplines and can think about the broader problem they’re solving rather than just the engineering part of it.

I describe this as fluid competence in “Sorcery”:

Fluid competence is general competence, meaning potentially competent enough in any domain to provide “what the situation needs.” It doesn’t mean you are expert at everything; no one can be. It’s based on a realistic assessment of what you can do now, and the minimum you need to learn to act outside your areas of expertise, well enough to address the current situation.

Fluid competence is a direct consequence of prioritizing meta-rational norms over rational, systematic ones.

I’ve sketched the way to develop this general competence in “How to be omniscient.”

Restarting the meta-rationality project? Talking my book

Two years ago, I started publishing draft sections of the Part of my book Meta-rationality that is actually about meta-rationality. (The first three Parts provide background understanding.)

One year ago, I abandoned it, part way in. I realized that it was a much larger project than I had expected. Plus, the explanatory structure was wrong, and there was less interest than I had expected.

Now I’m thinking it may be time to start again! If meta-rationality becomes the critical ingredient for success in the world of 2026, in software and possibly other fields, explaining it is important.

In investment finance, “talking your book” means hyping a future possibility which would benefit you financially if other people believed it was going to happen. Because they’d make it happen by acting on their belief.

Here I’m talking my book—specifically, my meta-rationality book! I want meta-rationality to be better understood and applied more widely. And I do think it’s reasonably likely that software meta-rationality will soon be much more highly valued, and better compensated, than it has been. And if you believe that, you may be motivated in that direction.

One problem with what I’ve published so far is that it starts with very abstract, dry generalities, and works gradually toward specifics. I had intended to use software development as just one source of concrete examples.

Maybe the structure should be inverted! It could start with lots of examples from software development, and work up the abstraction hierarchy to general lessons that could be applied across many fields. That would limit its appeal to other readers, but make it more accessible for people in tech.

I do have unpublished year-old drafts of additional explanations of software applications. But, I have no experience with cutting-edge coding agents. I can’t explain how to use those. (Yet, anyway.) I can explain what you need to do before and around using them: the aspects of software development that are not about coding. Those probably won’t change soon.

What do you think? Is this something you’d be excited to read?

But can’t AI do the meta-rational part too?

If AI systems will be able to do all the meta-rational work as well, then explaining it to humans now might be pointless. So: will they?

Maybe? Probably? Eventually? I don’t know! How could anyone know?

(I have a PhD in AI, and I mostly find it baffling. What I find most baffling is that everyone, from hair stylists to AI lab CEOs, is extremely confident in their opinions about the future of AI. These opinions, including those of supposed experts as far as I can tell, are based solely on feels. There’s nearly no meaningful evidence or reasoning involved.)

I’d say first that meta-rationality is not some metaphysically special capacity that only humans can have. (Arguments that “AI can never do X” usually rely on this delusion.) It’s just acting on what situations need, while taking context, purposes, and rationality into non-rational consideration. I am confident there’s no in-principle reason computers can’t do that.

Artificial meta-rationality requires access to context and purposes in a form the system can process. Currently, that mainly means text. Much of a situation’s context and purposes can be described in text. Ryan Lopopolo of OpenAI highlights the necessity of doing so:

Our human engineers’ goal was making it possible for an agent to reason about the full business domain directly from the repository itself. Knowledge that lives in Google Docs, chat threads, or people’s heads are not accessible to the system. We learned that we needed to push more and more context into the repo over time. That Slack discussion that aligned the team on an architectural pattern? If it isn’t discoverable to the agent, it’s illegible in the same way it would be unknown to a new hire joining three months later.

Meta-rational judgement is non-rational, but not irrational. It’s often described as “taste” or “intuition” (although those terms can be misleading). A couple weeks ago, Matt Shumer wrote in “Something Big Is Happening”:

The most recent AI models make decisions that feel like judgment. They show something that looked like taste: an intuitive sense of what the right call was, not just the technically correct one.

Lopopolo writes:

Human taste is fed back into the system continuously. Review comments, refactoring pull requests, and user-facing bugs are captured as documentation updates or encoded directly into tooling.

Situated, embodied, interactive human experience underlies much of context and purposes and judgement. Much of that probably can’t be captured in text. Not practically, anyway. It may be possible to make it available in other ways. I don’t think this is an in-principle limitation. I do suspect that it will put practical limits on artificial meta-rationality for quite some time.

Meta-rational competence is partly portable

For many reasons, software development is a domain uniquely suitable for automation. One is that it’s mostly rational. Extreme proponents of AI, and extreme opponents, both predict that all other rational domains will also soon get automated. Some say that will eliminate most office jobs as soon as the end of this year (2026). I’m skeptical. For many reasons, I think other domains are less amenable than software development.

I also think the meta-rational aspects of most domains are more nebulous than those of software development. Even if rational, routine work in law or medicine gets automated soon, meta-rational work there will need humans for much longer.

Among all rational work domains, software may also be the one in which meta-rationality is most important, and so the one in which it has been best understood. Working in the field may give you a significant head start elsewhere.

Meta-rational skills are rare and valuable everywhere. Elsewhere they are even rarer than in software development, in fact! Fortunately, they are partly transferable across domains, because fluid competence is general competence. Plus, meta-rational “sorcery” gives you the ability to learn the necessary bits of new fields quickly.

Both your skill at managing AI agents, and your meta-rationality skills, may give you a startlingly sharp edge in a possible upcoming nearly-no-jobs future. When and if humans are no longer needed in software development, you may still do well in some unrelated field.

[1] For a couple years, I dismissed widespread claims that AI coding assistants made developers 10x productive. I was right. It wasn’t true. AI didn’t provide a meaningful speed-up. This time seems different. I may be wrong. Many people with relevant expertise say current coding agents are still so unreliable they’re useless.

[2] For example, see the extensive Hacker News discussion of Anthropic’s “Building a C compiler with a team of parallel Claudes.” This was an impressive demonstration! C compilers are gigantic programs, and full of annoying fiddly stuff that’s unusually difficult for humans to write. On the other hand, in some ways compilers are atypically easy for coding agents. There are several open-source examples. Extensive documentation explains in detail how to write them (for example, textbooks), and what goes wrong when you try (for example, bug tracker databases and the detailed public discussions among gcc developers). Gigantic test suites include nearly full coverage for every failure mode. Presumably all these are in the training data, so the task is “in distribution.” That is: so well understood and documented that cleverness is unnecessary. It’s just masses of routine programming.

[3] Quote edited fairly extensively for relevance; I hope without altering the authors’ meaning.

[4] Quoted from “Analyzing requirements rationally.”

[5] This is the definition at the beginning of my explanation of meta-rationality.

[6] Quoted from “Software architecting is often neglected.”

[8] Quotes from Boris Cherny’s podcast are lightly edited for clarity and concision.

Please please resume the meta-rationality book! That book is so dear to me. I am so glad that I found it when I experienced meaning crisis almost nine years ago.

As a software engineer, I feel lucky that you are considering adding more examples from software engineering. I love the idea whole-heartedly!

Yes! I'd be very excited to read about more explanations and examples of meta-rational software development!